In 2026, a notable shift emerged in the embodied AI sector: a group of companies focused on models and algorithms began proactively acquiring robotics businesses and complete system development teams.

In April, Skild AI, a unicorn that develops large-scale embodied AI models, acquired Zebra’s robotics automation business; Amazon brought the humanoid robotics startup Fauna Robotics into its portfolio; and earlier, the autonomous driving firm Mobileye acquired the humanoid robotics company Mentee Robotics for approximately $900 million.

Skild AI Specializes in Developing a “Universal Embodied Brain”

Taken together, these transactions can no longer be regarded as isolated incidents. They share a common characteristic: the acquirers mostly come from the “brain side” of the ecosystem. Their original role was to give robots perception, decision-making, and learning capabilities; now they are moving directly into hardware, products, and application scenarios.

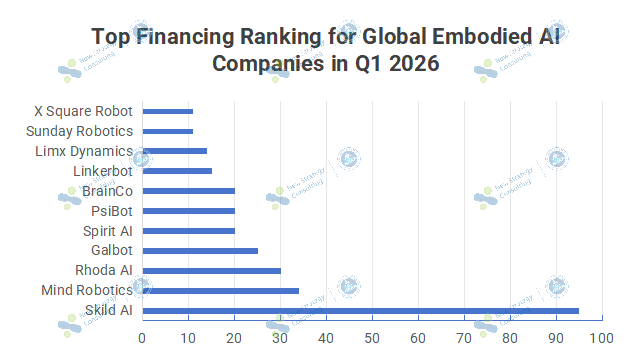

This change did not occur suddenly. Over the past year, funding in the field of embodied AI has shown a clear bias: more capital has flowed toward companies specializing in models, data, and embodied brains.

Capital Is Betting on the “Embodied Brain” and the “Data Closed Loop”

By 2026, this preference began to show up more clearly as a shift in control. Capital moved from “investing money in brains” to “giving brains a body.” As model companies stopped being mere upstream capability providers and moved directly into complete systems and application scenarios, the previously stable industrial division of labor began to be reshaped.

I. Why Does the “Brain” Require a “Body”?

Previously, embodied AI predominantly exhibited a “tiered collaborative” architecture: hardware manufacturers handled system integration and execution capabilities, while model developers provided perception and decision-making functions. The two components interfaced through APIs to progressively deploy solutions in specific scenarios. The financing dynamics mirrored this structure, with model and data companies commanding higher valuations, whereas hardware manufacturers bore greater engineering and delivery responsibilities.

On the surface, this was a collaborative arrangement in which each party played to its strengths. The foundation model company avoided the complexities of hardware manufacturing and supply chain pressures, while the robotics company could make its products more intelligent by using an external “brain.”

However, as the industry entered the phase of practical implementation, this division of labor began to reveal its shortcomings.

For “brain” companies, providing only algorithms and models makes it difficult to obtain usage data from the final product. Information such as when robots make errors, which actions fail, and what capabilities users truly need is generated at the hardware level and on-site. Without direct access to this real-world data, model iteration stalls.

For “body” companies, the issue is equally evident. Hardware manufacturing capabilities remain crucial, but without continuous learning and task generalization, the entire system can easily become a one-off engineering delivery project. It may perform specific fixed actions but struggle to evolve as data accumulates; it may excel in one scenario but be hard to replicate rapidly across diverse tasks.

Thus, the model of “empowering hardware” began to shift toward “owning hardware.”

The underlying logic is straightforward: embodied AI is neither purely a software business nor solely a hardware business. What holds real value is a closed-loop system: robots enter specific scenarios and generate data, which feeds back into the model; the model then refines its actions and task capabilities, driving continuous product evolution.

Within this closed loop, hardware is no longer merely a vehicle for model implementation, but rather serves as a data entry point, delivery terminal, and scenario connectivity hub. Whoever controls the hardware gains easier access to real-world data; whoever controls the data can more effectively train a more powerful “brain.”

Therefore, brain companies have begun expanding into the hardware sector, essentially aiming to achieve a closed-loop system.

What they need is not merely a robot capable of running models, but a comprehensive system that continuously generates data, possesses validation capabilities, and can operate in customer environments. Only by integrating the “brain” and “body” into a unified framework can model capabilities transition from demonstration to product implementation, and from laboratory settings to real-world applications.

This is precisely why “owning hardware” is becoming increasingly important.

While embodied AI was still in the proof-of-concept stage, algorithmic capabilities alone were enough to support valuations and growth expectations. However, as the industry enters the commercialization phase, standalone model capabilities become insufficient. Those who can integrate models, hardware, data, and application scenarios into a closed loop are more likely to gain dominance in the next stage.

II. Why Is the “Body” Beginning to Lose Its Dominance?

As the “brain” begins to integrate downward into hardware, the role of the “body” changes as well.

In recent years, the focus of the humanoid robotics industry has consistently centered on physical capabilities. Systems with stronger joints, more stable motion control, and greater bipedal flexibility have typically garnered more attention. Numerous robotics companies compete primarily on these physical attributes: optimizing structural designs, developing proprietary actuators, enhancing motion performance, and leveraging engineering expertise to deploy solutions in various applications.

However, as model capabilities have rapidly improved, the industry’s value focus has begun to shift.

An increasingly evident trend is that hardware is gradually becoming “infrastructure-like.” Many capabilities once regarded as core competitive barriers—such as motion control, mobility, and fundamental grasping capabilities—are becoming increasingly standardized. Particularly in the fields of humanoid and mobile robots, the underlying hardware architectures are converging, and supply chain maturity is rapidly improving.

This means that relying solely on the capabilities of the robot body makes it difficult to establish sufficiently high barriers over the long term.

For many complete-system manufacturers, the greater pressure comes from another issue: they are beginning to lose control over how the system is defined.

In the past, robotics companies determined what products looked like, the scenarios in which they would operate, and the tasks they would perform; models and algorithms primarily served as supporting capabilities. Today, however, more and more task logic is governed by the “brain.” Core capabilities, such as how robots understand their environment, plan actions, learn tasks, and iterate continuously, increasingly depend on model systems.

When the “brain” becomes the core of the system, the “body” is more prone to degenerate into a mere execution terminal.

This transformation has already occurred once in the autonomous driving industry. In its early stages, many companies competed primarily on sensors, hardware solutions, and vehicle platforms; later, it was data, algorithms, and system capabilities that truly shaped the industry landscape. Hardware remains crucial, but the dominant influence has begun shifting toward software.

A similar trend is emerging in embodied AI.

This is precisely why an increasing number of robotics companies are moving in the opposite direction and building up their own “brain”: developing proprietary vision-language-action (VLA) systems, establishing data platforms, and strengthening model training capabilities. They have realized that hardware capabilities alone are insufficient to gain a competitive edge in the next phase of development.

In the future, the industry may gradually split into two types of companies.

One category possesses model, data, and system capabilities and is responsible for defining how robots comprehend the world and execute tasks; the other provides standardized robot bodies, actuators, and engineering capabilities, operating more like a supply chain participant.

The “body” certainly will not disappear. Robots must consistently operate in the real world, performing movements, grasping actions, executing maneuvers, and meeting complex operational conditions and safety requirements.

In the new industrial landscape, however, it is the “brain” that truly determines the pace of robot evolution.

III. Whoever Can Create a Closed Loop Defines the Robot

The extension of the “brain” to the “body” does not imply that hardware becomes less critical. On the contrary, for robots to truly operate in factory, warehouse, household, and public service scenarios, they still require a stable robot body, reliable actuators, a mature supply chain, and long-term engineering refinement.

The change lies in the fact that the importance of hardware is being redefined.

In the past, hardware primarily determined whether a robot could move; moving forward, hardware must address another critical question: whether it can support the continuous evolution of the brain.

This imposes new requirements on robotics companies. They must not only demonstrate that their robots run fast, jump high, and perform complex maneuvers, but also prove that these robots can consistently generate high-quality data, support model training, and iteratively refine performance in real-world tasks. In other words, robots are no longer merely terminals for executing actions; they must also serve as gateways for data collection, capability validation, and model evolution.

This is precisely the fundamental reason why “brain-centric” enterprises have begun integrating hardware solutions.

For them, real-world data is of paramount importance. Embodied AI models cannot evolve solely through internet texts, images, or videos; they must engage with the physical world—interacting with objects, understanding spatial relationships, handling failures, and refining their actions. Every failed grasp, every path deviation, and every task interruption becomes part of the model’s iterative refinement.

Whoever has more real robots in operation will more readily acquire substantial real-world data; whoever controls more of that data will have greater opportunities to train more advanced embodied brains.

Therefore, future competition among embodied AI companies may no longer revolve solely around individual capabilities, but rather around closed-loop capabilities.

This closed-loop system comprises four key components: hardware integration into the scenario, scenario-driven data generation, data utilization for model training, and the model’s subsequent enhancement of robotic capabilities. The absence of any single component would significantly hinder a company from establishing sustainable competitive advantages.

This also means that industry dominance will concentrate among system-level companies.

A company that focuses solely on models, without hardware and real-world scenarios, finds it difficult to validate their capabilities; one that concentrates only on robot bodies, without data and models, cannot keep evolving. Truly competitive companies must integrate the “brain,” “body,” and “scenarios” to form a self-reinforcing system.

Thus, the trend of “brain” companies acquiring “body” manufacturers looks like ordinary mergers and acquisitions on the surface, but in reality it signals a shift in control over the industrial chain.

In the future, what determines the value of a robotics company may no longer be solely the number of machines it sells, but rather its ability to accumulate data, train models, enhance capabilities through these machines, and replicate such capabilities across diverse forms and scenarios.

From May 21 to 22, 2026, the Second Global Unmanned Forklift Application Scenario Competition & the Material Handling and Sorting Challenge for Embodied Wheeled Humanoid Robots 2026 will be hosted in Hangzhou by the China Mobile Robot Industry Alliance (CMRA) and the Humanoid Robot Scene Application Alliance (HRAA). Building upon the existing unmanned forklift category, this event introduces a dedicated wheeled humanoid robot track for the first time, addressing the industry’s practical needs for embodied intelligence applications and further bridging technological innovation with industrial implementation.